A decade ago, smart phone apps and virtual or gaming approaches were considered cutting-edge, emerging technologies that held great promise for the advancement of citizen science.

We have since progressed to a state where smart phone apps are being enhanced with a new suite of sensors that extends their citizen science functionality. Given the image-intensive nature of many ongoing marine citizen science campaigns, the image analysis domains of Artificial Intelligence and Machine Learning (considered Industry 5.0 components) have increasingly been applied to automate the validation of submitted images and thus to expedite the normally lengthy process of species identification and report validation.

A scientific paper recently published in the journal ‘Applied Sciences’, co-authored by Dr Adam Gauci and Prof. Alan Deidun (Department of Geosciences) and Dr John Abela (Department of Computer Information Systems), has brought us one step closer to the automated identification of individual jellyfish through one’s smart phone.

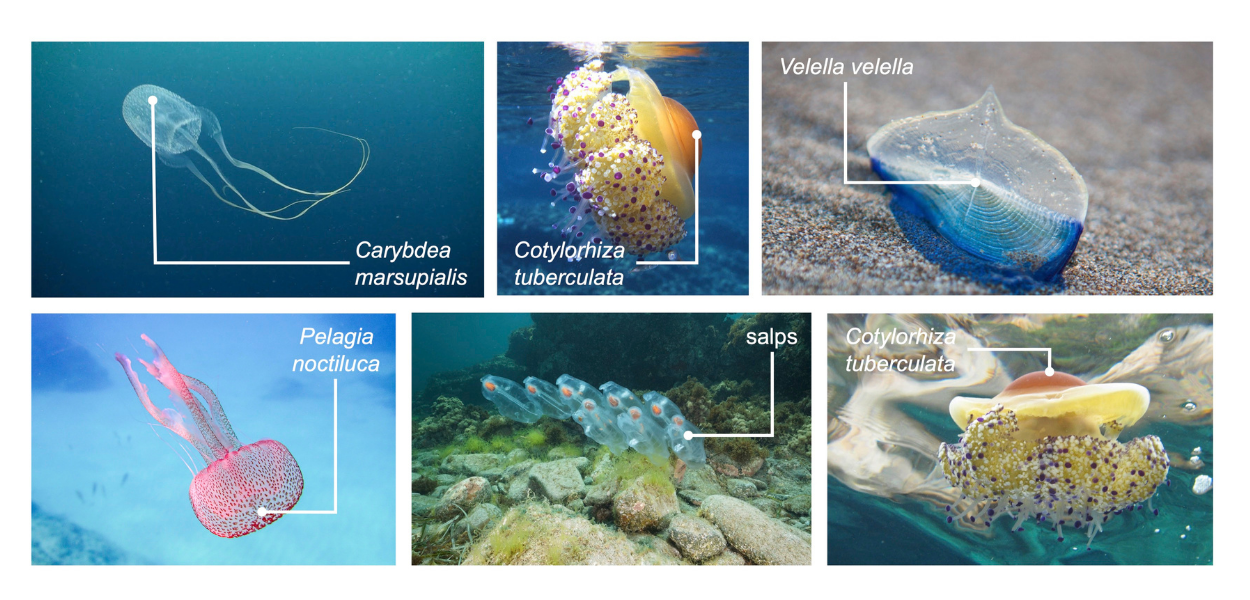

The protocol applied in this study made use of several hundred photos of five species of jellyfish (Pelagia noctiluca, Cotylorhiza tuberculata, Carybdea marsupialis, Velella velella and salps), selected from the thousands of photos submitted by members of the public to the Spot the Jellyfish citizen science campaign since June 2010. These photos were used as a training set for the ad hoc classification algorithm formulated as part of this study.

The algorithm gave very encouraging results: in many cases it correctly classified the jellyfish species shown in submitted photos, at precision levels frequently exceeding 90%. Cotylorhiza tuberculata (the fried-egg jellyfish) was the species most frequently identified correctly, most probably because it occurs in surface waters, which makes it more accessible for photography, whilst Carybdea marsupialis (the Mediterranean box jellyfish) scored the lowest concordance values, presumably because its nocturnal habits and highly transparent body hinder precise photography.
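For readers unfamiliar with the term, per-species precision is the share of photos assigned to a species that really show that species. The sketch below illustrates the calculation in Python. It is not the code used in the study: the function name `per_class_precision` and the toy labels are hypothetical and chosen purely for illustration.

```python
from collections import Counter


def per_class_precision(true_labels, predicted_labels):
    """Return {species: precision}, where precision is the number of correct
    predictions of a species divided by all predictions of that species."""
    if len(true_labels) != len(predicted_labels):
        raise ValueError("label lists must have the same length")
    # How often each species was predicted at all.
    predicted_counts = Counter(predicted_labels)
    # How often each species was predicted AND was the true label.
    correct_counts = Counter(
        pred for true, pred in zip(true_labels, predicted_labels) if true == pred
    )
    return {
        species: correct_counts[species] / total
        for species, total in predicted_counts.items()
    }


# Hypothetical toy labels (NOT results from the study).
true_labels = [
    "Pelagia noctiluca",
    "Cotylorhiza tuberculata",
    "Cotylorhiza tuberculata",
    "Carybdea marsupialis",
]
predicted_labels = [
    "Pelagia noctiluca",
    "Cotylorhiza tuberculata",
    "Cotylorhiza tuberculata",
    "Pelagia noctiluca",  # a box jellyfish photo misread as Pelagia
]

precision = per_class_precision(true_labels, predicted_labels)
for species, value in sorted(precision.items()):
    print(f"{species}: {value:.0%}")
```

A species that is never predicted has no defined precision and is simply absent from the result, which is why a hard-to-photograph species can be difficult to score from a small sample.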

Once fully implemented, for instance by embedding it within a customised app, the optimised algorithm can serve as a blueprint for citizen science campaigns that rely on image analysis. By facilitating the automated extraction and interpretation of information from submitted images, and thus the report validation process, the algorithm can improve the efficacy and attractiveness of such campaigns. The same research group previously developed a novel image analysis protocol for the automated characterisation of microplastics, which will be incorporated into a smart phone app within the JPI Oceans Andromeda project.

The full text of the publication in question can be consulted online.